Security Serious Week 2020 focused on disinformation, and there were many talks, tweetchats, presentations, panel discussions, and blogs.

One panel that caught my attention was entitled “Manipulation by disinformation: How elections are swayed”. It featured a stellar line-up: Jenny Radcliffe, aka The People Hacker, Human Factor Security; Rosa Smothers, Senior VP of Cyber Operations at KnowBe4 and former CIA threat analyst; Prof. Danny Dresner, Academic Cyber Security Lead at University of Manchester; and Chad Anderson, senior security researcher at DomainTools. The discussion was moderated by Tony Morbin, editor of IT Security Guru and former editor of SC Magazine.

Disinformation has been getting more airtime over the last couple of years, but it is still a somewhat misunderstood topic, particularly when it comes to how it can affect elections. Below are some of the key points raised during the panel discussion.

Understanding Disinformation

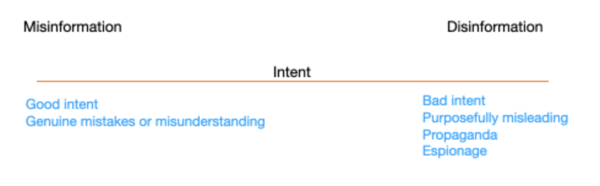

To start with, it’s important to understand what disinformation actually is. There’s a difference between disinformation and misinformation, and the key distinction is intent.

Misinformation can be a genuine mistake or misunderstanding, with no intent to cause deliberate harm, whereas disinformation is purposefully misleading in a harmful way. It is the realm in which propaganda and espionage lie.

Not all disinformation is created equal. On a sliding scale, the absolute truth may be at one end and an outright lie at the other. But the extremes aren’t necessarily where the most damage occurs. The most damaging areas are in the middle, where either the truth is taken out of context or a few lies are mixed in with the truth.

Media, Social Media, and Algorithms

Much of the discussion focussed on changes in the media landscape and how they have affected our perceptions of the world. It was agreed that most of the world doesn’t rely on traditional media as much as it used to, with more people getting their news from social media. Here, the role of algorithms is huge. If the algorithm sees that an individual is interested in a particular topic, it will keep feeding them content on that topic, and that content becomes increasingly extreme.

A lot of the behaviour of algorithms can be boiled down to business models and ad revenue. Social media platforms are inclined to keep users engaged by steering them towards the information they want to see. It speaks to human pride that people gravitate towards information that agrees with their biases, and the algorithms cater to that rather than challenging their viewpoint.

Panelists believed that governments have some level of responsibility for holding social media platforms more accountable. It was said that governments are responsible for protecting their nations against both domestic and international threats. In doing so, they are also obligated to limit the power of huge monopolies so that a couple of large corporations can’t control everything.

Even with more stringent government oversight, panelists believed that simply restricting the power of social media platforms was not enough. In tandem, critical thinking should be put on the educational curriculum so that future generations are taught how to distinguish between what is true and what is not.

Responsibility of Social Media Platforms

The third aspect was for social media platforms to be more proactive in identifying misinformation, whether by flagging potential disinformation or by linking back to the original source of an article. The platforms should also take stronger action against those who willingly spread disinformation, including banning persistent offenders and punishing offenders where laws have been broken.

Again, panelists believed that if the platforms would not do this willingly, then government regulation might be a route to consider.

Defending Democracy

While there is no doubt that disinformation exists, the pressing question is whether or not it has impacted democracy. There is an element of the Streisand effect, in which the impact of disinformation grows bigger the more we discuss it. However, the panel did agree that we would be foolish to think that disinformation doesn’t have an impact on the democratic process. It heavily influences how people interpret events in their world and the level of trust they place in societal structures, and shaping both is one of the primary objectives of disinformation.

Technology is the tool being used to spread disinformation and impact the democratic process. The panel suggested that while some technical measures could help reduce the impact, the real benefits would come from developing critical thinking skills, amongst the youth in particular. That would help build a culture in which individuals are better placed to discern for themselves what is true and what is not, and to understand the implications of disinformation, whether that relates to elections or any other facet of their lives.

For a helpful resource on disinformation, check out the on-demand webinar series on combating the global disinformation pandemic by my colleague Perry Carpenter, Chief Evangelist and Strategy Officer.