Analysis of malicious large language model (LLM) offerings on the dark web uncovers wide variation in service quality, methodology and value – with some being downright scams.

We’ve seen the use of this technology grow to the point where the cybercrime economy has expanded to include GenAI-based services like FraudGPT and PoisonGPT, with many others joining their ranks.

But new analysis of generative AI-based LLMs by security analysts at TrendMicro makes it clear that the claims about the capabilities and technologies behind these services are often more hype than reality.

Cybercriminals are leveraging these tools to develop malware quickly, improve social engineering tactics, create scripts, write emails and more.

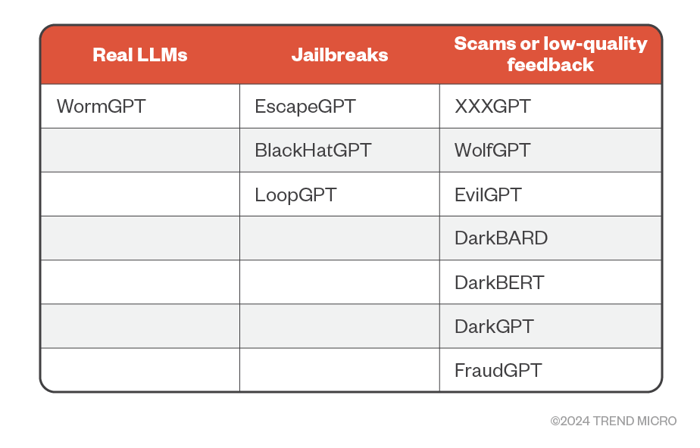

According to the analysis, not all LLM-based services are created equal. In a few cases, a genuinely unique malicious LLM has been created. In others, the service uses a version of ChatGPT, having found a way around the controls designed to keep the predominant AI from working on anything malicious.

And in still other cases, the services are simply scams or low-quality offerings that won’t deliver, taking advantage of the cybercriminal’s desire to launch an attack quickly (demonstrating there is still no honor among thieves).

Source: TrendMicro

Despite the lack of quality LLM-based services today, the massive use of such services suggests that a number of criminal organizations are likely investing in delivering something truly impactful that will consume the cybercrime market.

And when that happens, organizations are going to need more than just security solutions in place. You’re going to need vigilant users “standing at the (Inbox) gate” who, however believable email content may look, keep a scrutinizing eye out for anything suspicious, materially lowering the risk of a successful attack.

KnowBe4 empowers your workforce to make smarter security decisions every day. Over 65,000 organizations worldwide trust the KnowBe4 platform to strengthen their security culture and reduce human risk.