Vint Cerf – Photo by Charles Haynes

The Internet really still is in beta.

Vint Cerf, who together with Bob Kahn created TCP/IP, the protocol that routes Internet traffic, is often called the "father of the Internet". Cerf was recently interviewed about TCP/IP for a book called Fatal System Error and admitted “We never got to do the production engineering.” In software design language that essentially means "we never got out of beta". You can imagine what that means: some crucial features that really were needed never made it into the protocol that the world now runs on.

Cerf explained: “My thought at the time, thirty-five years ago, was not to build an ultra-secure system, because I could not tell if even the basic ideas would work.” The focus at the time, as it was an ARPANET defense project, was on fault tolerance, not security. The network was supposed to automatically reroute packets around atomic bomb blast sites, not protect you against identity theft or keep hackers out of your network.

Cerf has stated many times over that the only way the Internet is going to be truly secure is to rebuild it from the ground up. That is difficult, but can it be done? Absolutely. But it's going to take worldwide agreement first, then a lot of time, and then a lot of money. And looking at the state of the planet, it's doubtful whether this will ever be done, especially as some powerful players prefer things to stay as they are because they have access to everything now.

How did it get to be this bad?

There actually are security technologies that are far superior to what is being used today. So how come they are not being deployed in production? The answer has to do with people, not technology. Hackers and the NSA are far ahead in their offensive technology; they can essentially break into any piece of code. And then there is that pesky end-user. Machine-to-machine communications are easy, but once you throw in hundreds of workstations, dozens of servers, and thousands of devices and apps that are being driven by humans, things get very hairy, very quickly. Fancy mathematical security algorithms start to break down or get overwhelmed.

How does NSA Monitoring fit with all this?

T-shirts have appeared on CafePress with the following text: "NSA, the only part of government that actually listens". I own one, because this is the real situation at the moment. As Cerf stated, the basic transmission protocols were not built with security in mind. This allows organizations with access to centralized Internet traffic hubs to "hoover" up the data they deem critical. The problem is that if one organization can do it, others might follow soon after, and then the genie is totally out of the bottle. The NSA claims that they "touch" only 1.6% of daily Internet traffic. If the net carries 1,826 petabytes of information per day, then the NSA "touches" about 29 petabytes a day. IT pros are very interested in what that actually means. Analyze? Store? For how long?
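For readers who want to check that figure, here is a minimal sketch of the arithmetic in Python. The variable names are purely illustrative, and the two inputs are simply the numbers quoted above, not independently verified measurements.

```python
# Figures as quoted in the text (assumptions, not measurements).
DAILY_TRAFFIC_PB = 1826    # petabytes the net is said to carry per day
NSA_TOUCH_SHARE = 0.016    # the 1.6% the NSA says it "touches"

# Volume "touched" per day, in petabytes.
touched_pb = DAILY_TRAFFIC_PB * NSA_TOUCH_SHARE

print(f"Touched per day: {touched_pb:.1f} PB")
```

Rounded to whole petabytes, this matches the "about 29 petabytes a day" mentioned above.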

The long-term effects of this type of monitoring

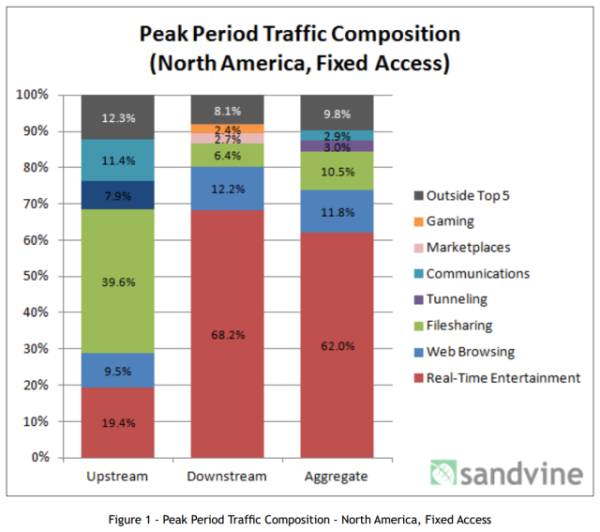

Let's dig a little bit deeper into that 1.6% the NSA claims they touch. First of all, the vast majority of data traffic is not email or web pages. If you look at the Sandvine numbers for the U.S. (see the graph), 62% is real-time entertainment, followed by P2P file-sharing at 10.5%, which is also mostly movies being shared (and mostly illegally). So the NSA is not looking at 72.5% of the data.

Web traffic is only 11.8% of aggregated up- and download traffic in the U.S., as per Sandvine. Actual communications traffic is just 2.9%. So do the math: the NSA’s 1.6% of net traffic would be roughly half of the actual email traffic on the net. One more thing: about 80% of email traffic is spam, which I am sure they are filtering out. In other words, it is highly likely they "touch" all email traffic in the U.S.
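The back-of-the-envelope reasoning above can be sketched in a few lines of Python. This is only a rough check under stated assumptions: the percentages are the ones quoted in the text, the names are illustrative, and the whole 2.9% communications category is treated as email, which is a simplification.

```python
# Shares of U.S. traffic in percent, as quoted in the text.
REAL_TIME_ENTERTAINMENT = 62.0
P2P_FILE_SHARING = 10.5
COMMUNICATIONS = 2.9       # simplifying assumption: treated as all email
NSA_TOUCHED = 1.6
SPAM_FRACTION = 0.80       # "about 80% of email traffic is spam"

# Traffic the NSA presumably has no interest in.
not_examined = REAL_TIME_ENTERTAINMENT + P2P_FILE_SHARING

# How much of the communications category 1.6% would cover.
touched_vs_comms = NSA_TOUCHED / COMMUNICATIONS

# Share of total traffic that is email once spam is filtered out.
non_spam_share = COMMUNICATIONS * (1 - SPAM_FRACTION)

# True if the touched share is at least as large as all non-spam email.
covers_all_non_spam = NSA_TOUCHED >= non_spam_share

print(f"Entertainment + P2P: {not_examined:.1f}%")
print(f"Touched vs. communications: {touched_vs_comms:.0%}")
print(f"Non-spam email share: {non_spam_share:.2f}%")
print(f"1.6% covers all non-spam email: {covers_all_non_spam}")
```

Under these assumptions, non-spam email comes to well under 1% of total traffic, so a 1.6% slice is more than large enough to contain all of it.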

Potential threats it poses to personal information and privacy

Now, given the fact that the NSA is building a huge facility in Utah, where they can store this type of information indefinitely, you see the risks. Government organizations simply have a bad record regarding data breaches; the last few years have shown an abundance of records being stolen by a variety of hackers.

Recent surveys show that U.S. citizens are very concerned about their financial stability and about government records falling into the wrong hands. The recent hack of millions of taxpayer records in South Carolina is a good example. If one's personal confidential records are freely bought and sold in a flourishing criminal underground economy, there is possible exposure for many years into the future.

But that is not all. Once a government agency has, say, 10, 15, or 20 years of your personal email stored, they can run algorithms that reveal a wealth of private information. For instance, there already are Artificial Intelligence software applications that, by looking at your email, can closely approximate:

- Your political convictions;

- Sexual orientation;

- Degree of agreement with current administration;

- Potential for violence;

- Potential for anti-social behavior;

- and any other specific custom trait they are looking for

The potential for abuse is horrifying. The recent IRS scandals, where the agency sought out specific organizations and treated them differently based on their political leaning, are child's play compared to what the NSA can come up with for each one of us individually when they store our personal email for decades. You might think, oh well, we will send everything with strong open source encryption. Not so fast. First, the NSA has broken most encryption already, and second, they can simply store that encrypted data for later, then go back to their archive once they have broken the encryption and decrypt the communication you thought was safe. Moreover, encrypted data stands out like a sore thumb, so if you encrypt your email, that paints a bright red target on your back from the get-go. I am sure you can imagine the ease with which the Fourth Amendment can be swept under the rug this way.

The old way does not cut it anymore

Remember the old days when security standards were created by a large body of experts? Government in cooperation with large companies threw a lot of resources at it, and 24-36 months later we had a new standard. But now, with large amounts of money being paid for 0-day vulnerabilities, hackers make security standards obsolete even before they come out. The game has changed completely. There are dozens if not hundreds of 0-day vulnerabilities in almost all applications and the NSA has many of these in their bag of tricks.

The new way is not here yet

You have to understand that there is a fundamental difference between cybersecurity and almost any other technology. The more people know about cybersecurity, the less secure you are. People have a choice to become a white hat or a black hat, and at the moment the dark side pays a lot more. Sometimes in Eastern European countries, the dark side is the only game in town. Continuing to fix vulnerabilities with regular patching is at best a Band-Aid and an expensive one at that. There is always the risk that a hacker will get into your network before you can apply the patch. Not to mention 0-day vulnerabilities where there simply is no patch.

"Pay me now or pay me later"

You know the saying, and the time has arrived to pay the piper. For well over 40 years we have trusted code that was not designed to be secure. It has caught up with us, and it is time to start using defensive technology that will truly keep attackers out. It begins with a good look at your defense-in-depth and at the very least becoming a hard target to penetrate. Personally, I would look at Application Control (a.k.a. whitelisting), which is a technology that turns the antivirus strategy on its head. Antivirus tries to keep known bad code out. Application Control only allows known good code to run and denies everything else by default. It's a bit more work but a lot safer. Last but not least, immediately start with effective security awareness training, because it's the end-users who are the low-hanging fruit that criminal hackers use to break into your network using social engineering tactics.
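To make the difference between the two strategies concrete, here is a minimal Python sketch of a default-deny check next to an antivirus-style blocklist check. It is an illustration only, not how any particular Application Control product works: the function names and the sample byte strings are hypothetical, and code is identified by its SHA-256 hash purely as a simplifying assumption.

```python
import hashlib
from typing import FrozenSet, Iterable


def sha256_of(data: bytes) -> str:
    """Return the SHA-256 hex digest used here to identify a piece of code."""
    return hashlib.sha256(data).hexdigest()


def build_hash_set(binaries: Iterable[bytes]) -> FrozenSet[str]:
    """Turn a collection of binaries into a set of their digests."""
    return frozenset(sha256_of(b) for b in binaries)


def allowlist_permits(binary: bytes, allowlist: FrozenSet[str]) -> bool:
    """Application Control: run only known good code, deny everything else."""
    return sha256_of(binary) in allowlist


def blocklist_permits(binary: bytes, blocklist: FrozenSet[str]) -> bool:
    """Antivirus-style: run everything except code already known to be bad."""
    return sha256_of(binary) not in blocklist


# Hypothetical sample data standing in for real executables.
approved_app = b"payroll application v1"
known_malware = b"old, well-known trojan"
zero_day = b"brand-new malware nobody has seen yet"

allowlist = build_hash_set([approved_app])
blocklist = build_hash_set([known_malware])

for name, code in [("approved app", approved_app),
                   ("known malware", known_malware),
                   ("0-day", zero_day)]:
    print(f"{name}: blocklist={blocklist_permits(code, blocklist)}, "
          f"allowlist={allowlist_permits(code, allowlist)}")
```

In this sketch the never-before-seen sample passes the blocklist check but is refused by the allowlist check, which is the point of denying by default. The extra work shows up in keeping the allowlist current every time approved software is updated.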