The U.S. government has been pushing people to avoid SMS- and voice call-based multi-factor authentication (MFA) for years, but its most recent warning goes further: avoid any MFA that is overly susceptible to phishing. That is only common sense, since most data breaches involve social engineering. But which MFA types does the government mean, and what does that mean for you? Read on.

The U.S. Government Has Discouraged SMS- and Voice Call-Based MFA Since 2017

The U.S. government has discouraged SMS- and voice call-based MFA ever since it released drafts of its revised Digital Identity Guidelines, NIST Special Publication (SP) 800-63-3, which was finalized in 2017.

NIST made this explicit in SP 800-63-3, Part B (published as SP 800-63B), which states that “Use of the PSTN [Public Switched Telephone Network, or a phone line connection in human-speak] for out-of-band [authentication] verification is RESTRICTED.” This means any out-of-band authentication, including MFA, that delivers its verification over your phone number is “restricted.” That includes all SMS- and voice call-based MFA.

Note: This does not mean all phone-based MFA apps are restricted. Most MFA phone apps rely on additional authentication that is not tied to a phone number. If the user’s phone number gets rerouted (for example, through a SIM swap), the app does not go along with it, and a thief who installs the same app on a phone using your number still cannot use it to log in as you without that additional authentication.
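To make the distinction concrete, here is a minimal sketch of how many authenticator apps generate their codes, assuming they implement the time-based one-time password (TOTP) algorithm from RFC 6238. The code depends only on a secret shared with the site at enrollment and the current time, so rerouting the phone number does not hand the codes to a thief. The function names are illustrative. Keep in mind that codes like these are still one-time codes a user can be tricked into typing into a fake site.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226), using HMAC-SHA-1."""
    # The moving factor is the counter encoded as 8 big-endian bytes.
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte select a 4-byte window.
    offset = mac[-1] & 0x0F
    value = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


def totp(secret: bytes, now: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time-step counter."""
    if now is None:
        now = time.time()
    # Nothing here involves a phone number: only the shared secret and the clock.
    return hotp(secret, int(now // step), digits)


if __name__ == "__main__":
    # RFC 6238 test secret; a real app would store a random per-account secret.
    demo_secret = b"12345678901234567890"
    print(totp(demo_secret, now=59, digits=8))  # 94287082 (RFC 6238 test vector)
```

Because the site and the app each compute the code independently from the same secret, anyone who later receives the victim's SMS messages learns nothing about these codes.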

What does restricted mean? It is defined in Section 5.2.10 of the same document. In short, that section says restricted authenticators carry extra risk. A governed organization that uses a restricted authentication method must understand and accept that risk, tell every user of the restricted method that it is extra risky, and offer those users at least one alternative authentication method that is not restricted.

Some government authentication solutions may meet that requirement, but SMS-based MFA is probably the most widely used, and most often mandated, MFA method on the Internet. Tens of thousands of companies use it, companies you and I deal with every day, and not one of them has ever warned me that the SMS-based (or voice call-based) MFA they force me to use is extra risky.

The Digital Identity Guidelines close their section on RESTRICTED authenticators by stating that vendors using restricted MFA solutions must “Develop a migration plan for the possibility that the RESTRICTED authenticator is no longer acceptable at some point in the future and include this migration plan in its digital identity acceptance statement.”

Now a Bunch of Other MFA Solutions Have Been Declared Persona Non Grata

Well, that future point has arrived, and it rightly adds more types of MFA solutions that the government says vendors and people should not use.

President Biden’s recent executive order (EO 14028) asked all agencies to develop zero trust architectures, a move most security experts welcomed. A related clarifying memo from the Office of Management and Budget (OMB M-22-09) states, “For routine self-service access by agency staff, contractors and partners, agency systems must discontinue support for authentication methods that fail to resist phishing, such as protocols that register phone numbers for SMS or voice calls, supply one-time codes, or receive push notifications.”

So, there you go. The U.S. government is telling its agencies, and really, the whole world, “Stop using any MFA solution that is overly susceptible to phishing, including SMS-based, voice calls, one-time passwords (OTP) and push notifications!” This describes the vast majority of MFA used today. There are no published figures on this, but I bet that over 90% of all MFA is susceptible to easy phishing.

How? Most MFA users can be tricked into revealing their MFA codes, or into letting an attacker steal their access control token, simply by clicking the wrong link in a phishing email. The link sends the victim to a “proxy” server that relays the victim’s traffic to the legitimate destination server the victim thought they were going to in the first place. But the proxy server now captures everything the legitimate server sends to the victim, and vice versa. That includes all login information: login name, password and any MFA codes provided. The attacker can even capture the resulting access control token cookie, which lets the attacker take over the victim’s session.
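The relay trick works because a site that checks only a password and a one-time code cannot tell whether those values came straight from the user or passed through a proxy. The toy simulation below uses hypothetical `ToySite` and `ToyPhishingProxy` classes in place of real servers (there is no networking at all) to show the proxy forwarding a valid login unchanged while keeping copies of the credentials, the code, and the resulting session token.

```python
import secrets
from typing import List, Optional, Set, Tuple


class ToySite:
    """Hypothetical stand-in for the legitimate destination server."""

    def __init__(self, password: str, current_code: str) -> None:
        self._password = password
        self._current_code = current_code  # pretend this is the valid OTP right now
        self._sessions: Set[str] = set()

    def login(self, username: str, password: str, code: str) -> Optional[str]:
        # Toy check: the site sees only the submitted values, not where they were typed.
        if password == self._password and code == self._current_code:
            token = secrets.token_hex(16)  # the session cookie value
            self._sessions.add(token)
            return token
        return None

    def has_session(self, token: Optional[str]) -> bool:
        return token in self._sessions


class ToyPhishingProxy:
    """Hypothetical adversary-in-the-middle that relays and records traffic."""

    def __init__(self, upstream: ToySite) -> None:
        self._upstream = upstream
        self.captured: List[Tuple[str, ...]] = []

    def login(self, username: str, password: str, code: str) -> Optional[str]:
        self.captured.append(("request", username, password, code))
        token = self._upstream.login(username, password, code)  # forwarded unchanged
        self.captured.append(("response", token or ""))
        return token  # the victim gets a working session and notices nothing

    def stolen_token(self) -> Optional[str]:
        # Most recent non-empty session token the proxy has seen.
        for entry in reversed(self.captured):
            if entry[0] == "response" and entry[1]:
                return entry[1]
        return None


if __name__ == "__main__":
    site = ToySite(password="correct horse", current_code="492039")
    proxy = ToyPhishingProxy(site)
    victim_token = proxy.login("alice", "correct horse", "492039")
    attacker_token = proxy.stolen_token()
    print(victim_token == attacker_token, site.has_session(attacker_token))  # True True
```

From the site's point of view nothing went wrong, which is why the defense has to bind the login to the real site's identity rather than rely on the secrecy of a code.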

Hacking OTP MFA Demo

Here is a great video on it by KnowBe4’s Chief Hacking Officer, Kevin Mitnick. A screenshot of the video is below.

I think anyone who has not seen the video before will be shocked at how easy it is to phish around someone’s supposedly super secure MFA solution. This attack type does not work against all MFA solutions, but it works against probably 80% to 90% of them (FIDO2 is one MFA type that this specific attack does not work against).

Bogus Recoveries

Any login method protected by phishable MFA (SMS or OTP) can be bypassed just as easily using text messages. Here is an example in which the attacker pretends to be Gmail or Google tech support. The attacker would have to know the victim’s Gmail address and phone number, but those are pretty easy to learn. The attacker sends the victim this SMS message.

Then the attacker goes to the Gmail login page and types in the victim’s email address to start the login process.

The attacker clicks the Forgot password link provided by Gmail (they really do not know the user’s password). This takes them to another screen, which asks them to try a previous password. The attacker instead chooses Try another way.

Gmail, believing it is dealing with the legitimate user, then offers the attacker a handful of different ways to recover the account, one of which is to send an SMS message with a recovery code.

The victim gets the recovery code.

And then types it back in response to the attacker’s initial message.

It is game over! The attacker uses the recovery code to take over the account.

Spoofed Recoveries

But there are even easier SMS recovery hacks. In these cases, the attacker determines ahead of time which of the victim’s accounts allow SMS recovery with a message that contains a recovery PIN and little identifying information. That probably describes half of all SMS recovery messages. The user just sees a PIN arriving from some random SMS short code.

Then the attacker sends any message they like to trick the user into handing over the recovery code. The victim does not know it is a recovery code; they think whoever is contacting them is sending a PIN verification code for the current transaction. The attacker can use any social engineering scheme. Here are some examples:

Asking the victim to type YES to approve just makes the fraudulent message seem more legitimate. I mean, why would a hacker ever ask permission? Here are two other social engineering schemes I just thought up off the top of my head. Both are going to trick a lot of users.

Now suppose the PIN recovery message is branded (that is, it contains the legitimate service’s name). All the attacker has to do is claim that they use Gmail or Microsoft Office 365, or whatever brand appears in the PIN message, as part of their own authentication scheme. It is really not that hard to do. All SMS-based MFA can be stolen, hijacked, MitM’d and impersonated far too easily.

Phishing Push-Based MFA

With push-based MFA, when you log on to a website, a related service “pushes” a login approval message to you. You click “Approve,” “Yes” or something similar to get logged in, or choose the other option to block the login. It is pretty easy to use and fairly secure. See an example push-based approval notice below.

Push-based authentication is used by all sorts of popular vendors, like Google (shown above), Amazon and Microsoft.

It turns out end users frequently approve logins that they did not initiate. It is a very common problem in environments where push-based authentication has been implemented. Users approve malicious logins, often when they are nowhere near their computer. They are at home, watching a TV program, eating dinner or whatever, when a push-based approval notice comes in, and they approve it.

The first time I heard of this issue was from a Midwest CEO. His organization had been hit by ransomware to the tune of $10M. Operationally, they were still recovering nearly a year later. And, embarrassingly, it was his most trusted VP who let the attackers in. It turns out that the VP had approved more than 10 push-based messages for logins that he was not involved in. When the VP was asked why he approved logins he was not actually making, his response was, “They (IT) told me that I needed to click on Approve when the message appeared!”

And there you have it in a nutshell. The VP did not understand why (“the WHY”) it was so important to ONLY approve logins they were participating in. Perhaps they were told this. But there is a good chance that IT, when rolling out the new push-based MFA, told them what to do to log in successfully but never mentioned what to do if the same message arrived when they were not logging in. Most likely, IT assumed that anyone would naturally understand that this also meant not approving unexpected, unexplained logins. Was the end user trained on what to do when an unexpected login request arrived? Were they told to click “Deny” and to contact the IT Help Desk to report the active intrusion?

Or was the person given the correct instructions for both approving and denying, and they just did not stick? We all have busy lives. We all have too much to do. Perhaps the importance of the last part of the instructions just did not sink in. We can think we hear and not really hear. We can hear and still not care. This is one of the core points of Perry Carpenter’s book, Transformational Security Awareness: What Neuroscientists, Storytellers, and Marketers Can Teach Us About Driving Secure Behaviors.

Many professional pen testers tell the same story: users in environments with push-based MFA approve their “malicious” login attempts remarkably often. One pen testing team leader told me, “When we hear that a client has push-based notification, we literally start laughing. You cannot help it. We know what we are going to do and how we are going to get in. I would say three out of five people we test just approve our logins. It is like hitting all the buttons on an apartment building buzzer console to see which random people will buzz you through the locked security door because someone’s always waiting for someone. There are always people who will just click ‘Yes’ because they have been trained to click on ‘Yes’, and the whole reason for when they should click on ‘Yes’ and ‘No’ has become a forgotten distraction. It makes our jobs easy.” Most of the penetration teams say that it is so easy to break in using push-based MFA that they would not recommend it to anyone. The U.S. government agrees. And that is a problem when our largest vendors, including Google and Microsoft, are recommending it to everyone as the way to solve the world’s hacking problems.
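The buzzer analogy is really a probability argument. As a rough sketch, if each targeted user independently approves a bogus push with probability p (the pen tester's anecdotal "three out of five" would be p = 0.6), then the attacker's chance that at least one of n targets approves is 1 - (1 - p)^n. Independence and a single shared p are simplifying assumptions, and the function name is hypothetical.

```python
def chance_someone_approves(p_approve: float, targets: int) -> float:
    """Probability that at least one of `targets` users approves an unexpected push.

    Assumes every user approves independently with the same probability p_approve.
    """
    if not 0.0 <= p_approve <= 1.0:
        raise ValueError("p_approve must be between 0 and 1")
    if targets < 0:
        raise ValueError("targets must be non-negative")
    # Complement rule: 1 - P(every target denies or ignores the push).
    return 1.0 - (1.0 - p_approve) ** targets


if __name__ == "__main__":
    for p in (0.6, 0.1):
        for n in (1, 5, 20):
            print(f"p={p}, n={n}: {chance_someone_approves(p, n):.3f}")
```

Under these assumptions, even if only one user in ten approved, a campaign against 20 users would succeed roughly 88% of the time, which is why a low per-user approval rate is little comfort.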

Government Does Define Acceptable MFA

The U.S. government does not hate all MFA or think all of it is easily phishable. The same zero trust memo states, “This requirement for phishing-resistant protocols is necessitated by the reality that enterprise users are among the most valuable targets for phishing, but can be given phishing-resistant tokens, such as Personal Identity Verification (PIV) cards, and be trained in their use. For many agency systems, PIV or derived PIV will be the simplest way to support this requirement. However, agencies’ highest priority should be to rapidly implement a requirement for phishing-resistant verifiers, whether this is PIV or an alternative method, such as WebAuthn.”

The U.S. government has strongly supported the use of PIV cards for decades. Learn more about PIV cards here and here. I worked with a very common U.S. government PIV implementation, the Common Access Card (CAC). Most government employees must insert one into the smart card reader on their government-issued computer and enter a PIN to log into their government device, use the network or run any CAC-protected application. The U.S. government is also big on biometrics (although biometrics have plenty of their own issues). WebAuthn is a W3C passwordless authentication standard that any MFA solution can support, and it is a core component of FIDO2, together with the FIDO Alliance’s Client to Authenticator Protocol (CTAP).
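Part of what makes WebAuthn phishing-resistant is that the browser, not the user, records which site it is talking to, and the relying party checks that record. The sketch below shows a few of the client-data checks from the W3C WebAuthn specification's assertion-verification steps (type, challenge, origin), plus the relying party ID hash at the start of the authenticator data. It is deliberately incomplete: it skips signature verification, flags and signature counters, so treat it as an illustration and use a maintained WebAuthn library in practice. The function names and example domains are hypothetical.

```python
import base64
import hashlib
import hmac
import json
from typing import List


def b64url(data: bytes) -> str:
    """Base64url without padding, as used for the WebAuthn challenge field."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def client_data_problems(client_data_json: bytes, expected_challenge: bytes,
                         expected_origin: str) -> List[str]:
    """Return the reasons an assertion's clientDataJSON should be rejected."""
    problems = []
    client_data = json.loads(client_data_json.decode("utf-8"))
    if client_data.get("type") != "webauthn.get":
        problems.append("type is not webauthn.get")
    challenge = str(client_data.get("challenge", "")).encode("utf-8")
    if not hmac.compare_digest(challenge, b64url(expected_challenge).encode("ascii")):
        problems.append("challenge does not match the one this server issued")
    # The browser fills in the origin from the page the user is actually on,
    # so a look-alike phishing domain shows up here even if the user is fooled.
    if client_data.get("origin") != expected_origin:
        problems.append(f"origin {client_data.get('origin')!r} is not {expected_origin!r}")
    return problems


def rp_id_hash_matches(authenticator_data: bytes, rp_id: str) -> bool:
    """The first 32 bytes of authenticatorData are the SHA-256 hash of the RP ID."""
    expected = hashlib.sha256(rp_id.encode("utf-8")).digest()
    return hmac.compare_digest(authenticator_data[:32], expected)


if __name__ == "__main__":
    challenge = b"server-issued-random-challenge"
    phished = json.dumps({
        "type": "webauthn.get",
        "challenge": b64url(challenge),
        "origin": "https://login.examp1e.com",  # look-alike domain
    }).encode("utf-8")
    print(client_data_problems(phished, challenge, "https://login.example.com"))
```

The point of the design is that the origin check does not depend on the user noticing anything: the mismatch is caught by software on both ends.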

Phishable MFA Must Evolve

So, what does the government’s stance on overly phishable MFA mean for the rest of us? For one, most MFA is overly susceptible to phishing. It is so overly phishable that it really does not provide as much protection as most organizations and users think. We need to change that.

Every MFA solution needs a security review, and the common ways attackers can bypass and phish around it need to be identified and remediated. We should not accept or use any MFA solution that can be easily bypassed by a phishing email containing a rogue link. MFA was supposed to stop easy phishing from working in the first place, but for most MFA solutions, it does not.

Phishing-susceptible MFA solutions need to be redesigned to prevent easy phishing. It can be done, and how depends on the type of solution. Most of this phishing can be defeated by doing what FIDO2-compliant MFA devices do: register the device with each legitimate website (and the website with the device) ahead of time, so the device will only authenticate to the sites it was registered with. That way, if a user gets tricked into visiting a fake website, their MFA solution will simply not work and will not provide any authentication information or codes.
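That site binding can be sketched as a toy authenticator that keys every credential by the site's relying party ID. The assumptions are loud here: `ToyAuthenticator` is hypothetical, the browser is assumed to pass in the domain the user is really visiting, and a per-site HMAC secret is swapped in for the per-site public/private key pair a real FIDO2 authenticator generates, purely so the example runs with only the standard library.

```python
import hashlib
import hmac
import secrets
from typing import Dict, Optional


class ToyAuthenticator:
    """Hypothetical, simplified stand-in for a FIDO2 authenticator."""

    def __init__(self) -> None:
        # One credential per relying party ID (the site's domain).
        self._credentials: Dict[str, bytes] = {}

    def register(self, rp_id: str) -> bytes:
        """Create a credential for one site and return what the site stores."""
        key = secrets.token_bytes(32)
        self._credentials[rp_id] = key
        # Simplification: a real authenticator keeps a private key and gives
        # the site only the public key; here the site gets the shared secret.
        return key

    def respond(self, rp_id: str, challenge: bytes) -> Optional[bytes]:
        """Answer a login challenge, but only for a site it was registered with."""
        key = self._credentials.get(rp_id)
        if key is None:
            return None  # a look-alike domain gets nothing to relay or replay
        return hmac.new(key, rp_id.encode("utf-8") + challenge, hashlib.sha256).digest()


if __name__ == "__main__":
    authenticator = ToyAuthenticator()
    site_key = authenticator.register("example.com")
    challenge = secrets.token_bytes(16)
    print(authenticator.respond("example.com", challenge) is not None)  # registered site
    print(authenticator.respond("examp1e.com", challenge))              # phishing site
```

Because the lookup key comes from the browser rather than from anything the user reads or types, a convincing fake page cannot talk the authenticator into answering.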

Educate Everyone

But it is going to take a long time for most MFA methods to be modified to prevent phishing. Every stakeholder in any MFA solution should be educated about the methods hackers could use to bypass their particular MFA solution. Vendors should provide threat modeling to potential customers. Everyone (buyers, senior management, sysadmins and users) should understand how their MFA solution can be hacked and bypassed, and be instructed on how to prevent it. When push-based MFA users are approving logins they are not involved in, you know there is a lack of education. We do not let our end users use passwords on our systems without giving them warnings and education (e.g., “Do not share your password with anyone else,” “We will never ask for your password in an email,” “Your password should be long and contain some complexity, and never be shared with any other website or service,” and so on). We just need to do the same with MFA users, especially until our MFA solutions become less phishable.

Relevant MFA Links

41-page Hacking MFA ebook covering 20 ways that MFA can be hacked; it was the original source material for the larger Hacking Multifactor Authentication book

Book

Hacking Multifactor Authentication book (Wiley)

Book discussing over 50 ways to hack various MFA solutions. It starts by pointing out the strengths and weaknesses of passwords, details how authentication works, covers all the various MFA methods and how to hack and protect them, and ends by telling readers how to pick the best MFA solution for themselves and their organization.

Webinar

One-hour webinar on how to hack various MFA solutions

MFA Assessment Tool

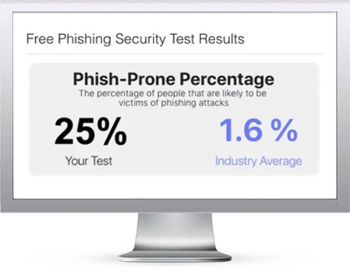

Free Multifactor Authentication Security Assessment tool

Answer a dozen or so questions describing your current MFA solution (or one you are considering) and get back a report detailing all the ways it could be hacked

Here's how it works: